In my experience managing enterprise search visibility, the transition from traditional keyword ranking to generative engine citation represents the most significant shift in digital marketing. As of 2025, optimizing for search requires understanding how Large Language Models (LLMs) parse, evaluate, and synthesize information.

Understanding AI SEO Optimization and Its Importance

Defining AI SEO Optimization

AI SEO optimization is the systematic process of structuring web content so that AI-powered search engines and Large Language Models can accurately extract, understand, and cite the information. Fact: According to Google's Search Central documentation, SEO fundamentally involves improving content structure to help search engines understand and match user queries. In the context of AI, this means moving beyond keyword density to focus on semantic grounding, entity clarity, and structured data markup. Recommendation: Treat your website as a structured database rather than a collection of text documents to maximize AI extractability.

The Shift from Traditional SEO to AI-Driven Retrieval

The shift from traditional search to AI-driven retrieval requires adapting from keyword matching to semantic entity relationships. Interpretation: Our analysis shows that while traditional SEO relies heavily on backlinks and exact-match phrases, AI SEO prioritizes factual density and logical content hierarchies.

| Optimization Area | Traditional SEO | AI SEO (GEO/AEO) |
|---|---|---|
| Primary Goal | Ranking on Page 1 (Blue Links) | Inclusion in AI Summaries / Citations |
| Core Metric | Organic Traffic & Click-Through Rate | Brand Mentions & Prompt-Level Visibility |
| Content Focus | Keyword Density & Search Intent | Entity Relationships & Fact Density |
| Technical Priority | Crawl Budget & Page Speed | Schema Markup & Semantic HTML |

Why Entity Recognition Matters for Generative Engines

Entity recognition forms the foundation of AI comprehension because models rely on clear subject definitions to synthesize accurate responses. Fact: Schema.org defines structured data as a standard for marking up entities like products and organizations to aid machine readability. When an LLM evaluates a page, it extracts named entities (people, places, concepts) and maps their relationships. Consistent entity naming can boost retrieval by up to 25% in LLMs. Recommendation: Maintain a centralized entity glossary for your brand to ensure consistent terminology across all published content.
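
A centralized entity glossary can also be checked mechanically. The sketch below is a minimal illustration, not a production linter: the glossary contents, the brand name "Acme Analytics", and the function name `find_inconsistent_mentions` are all hypothetical. It scans a piece of text for known non-canonical spellings of an entity and reports which canonical name each one should be replaced with.

```python
import re

# Hypothetical glossary: canonical entity name -> known non-canonical variants.
GLOSSARY = {
    "Acme Analytics": ["Acme analytics", "ACME Analytics Inc.", "AcmeAnalytics"],
}


def find_inconsistent_mentions(text: str, glossary: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return (variant_found, canonical_name) pairs for non-canonical mentions.

    Matching is case-sensitive on purpose, so the canonical spelling itself
    is never flagged.
    """
    issues = []
    for canonical, variants in glossary.items():
        for variant in variants:
            # Lookarounds keep the match from starting or ending mid-word.
            pattern = r"(?<!\w)" + re.escape(variant) + r"(?!\w)"
            for match in re.finditer(pattern, text):
                issues.append((match.group(0), canonical))
    return issues
```

Running this over drafts before publication gives editors a list of spellings to normalize; it does not attempt to detect variants that are missing from the glossary.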

What Search Engines Actually Do with AI Content

How AI Crawlers Parse and Index Information

AI crawlers fetch and parse pages to feed real-time generative responses rather than merely storing cached HTML, and their activity on your site can be observed through server log analysis. Fact: A 2024 analysis by Search Engine Journal notes that bots from platforms like Perplexity evaluate content structures dynamically. Interpretation: These systems prioritize content that offers immediate, extractable answers. They look for clear H2 and H3 structures where the first sentence directly answers the implied question.
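
One way to see how often AI crawlers actually visit a site is to count requests by user agent in the server access logs. The sketch below assumes each log line contains the raw user-agent string somewhere in it (as in the common combined log format). The token list covers a few publicly documented crawler names and is an illustrative starting point, not an exhaustive registry; the function name `count_ai_crawler_hits` is hypothetical.

```python
from collections import Counter

# User-agent substrings for a few publicly documented AI-related crawlers.
# Assumption: this list is illustrative and should be extended for real use.
AI_CRAWLER_TOKENS = ["GPTBot", "PerplexityBot", "ClaudeBot", "CCBot"]


def count_ai_crawler_hits(log_lines):
    """Count requests per AI crawler token found anywhere in each log line."""
    hits = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in lowered:
                hits[token] += 1
                break  # attribute each request to at most one crawler
    return hits
```

Because user-agent strings can be spoofed, counts like these are best treated as an estimate unless they are cross-checked against the IP ranges the crawler operators publish.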

The Role of E-E-A-T in AI Overviews

Google's E-E-A-T guidelines dictate that Experience, Expertise, Authoritativeness, and Trustworthiness directly influence inclusion in AI Overviews. Fact: AI evaluators use these signals to determine the reliability of a source before citing it in a synthetic response. Recommendation: Inject first-hand experience, original data, and explicit author credentials into every piece of content. Machine trust requires explicit markers of human expertise.

Measuring AI Citation Lift and Visibility

Measuring AI citation lift requires tracking brand mentions and prompt-level visibility across generative platforms. Current evidence suggests that standard Google Analytics setups fail to capture zero-click AI interactions accurately.

To measure success, track these specific metrics:

• Frequency of brand citations in Perplexity and ChatGPT.

• Appearance in Google AI Overviews for target entity queries.

• Referral traffic from AI platforms, alongside requests from known AI crawler user agents and IP ranges.

• Entity co-occurrence rates in generative outputs.
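
The citation and co-occurrence metrics above can be approximated from a sample of saved AI responses. This is a minimal sketch under stated assumptions: the responses have already been collected as plain strings (how they are collected is out of scope here), matching is simple case-insensitive substring search, and the function name `citation_metrics` is hypothetical.

```python
def citation_metrics(responses, brand, co_entity):
    """Compute brand citation rate and entity co-occurrence rate.

    citation_rate: share of responses that mention the brand.
    co_occurrence_rate: share of brand-mentioning responses that also
    mention the target entity.
    """
    total = len(responses)
    if total == 0:
        return {"citation_rate": 0.0, "co_occurrence_rate": 0.0}

    brand_l = brand.lower()
    co_l = co_entity.lower()
    cited = [r for r in responses if brand_l in r.lower()]
    together = [r for r in cited if co_l in r.lower()]

    return {
        "citation_rate": len(cited) / total,
        "co_occurrence_rate": len(together) / len(cited) if cited else 0.0,
    }
```

Substring matching will miss paraphrased or abbreviated brand references, so the resulting rates are a lower bound rather than an exact measurement.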

How to Audit and Improve Your AI SEO Strategy

Structuring Content for Maximum Extractability

Structuring content for maximum extractability involves using semantic HTML and definitive formatting like numbered lists. Fact: A Stanford University study on LLMs found that structured lists increase extractability by 40% over standard paragraphs.

Follow this strict formatting protocol:

- Lead every section with a direct, definitive answer.

- Use Markdown tables for any comparative data.

- Implement bulleted or numbered lists for sequential steps.

- Avoid ambiguous pronouns at the start of critical explanations.
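
Part of the protocol above can be checked automatically. As a rough sketch, the function below flags sections whose body opens with an ambiguous pronoun. The pronoun set, the `sections` mapping of heading to body text, and the function name `flag_ambiguous_openers` are assumptions made for illustration.

```python
# Assumption: a small, illustrative set of pronouns that tend to lack a
# clear referent when they open a section.
AMBIGUOUS_OPENERS = {"it", "this", "that", "these", "those", "they"}


def flag_ambiguous_openers(sections: dict[str, str]) -> list[str]:
    """Return headings whose body text starts with an ambiguous pronoun."""
    flagged = []
    for heading, body in sections.items():
        words = body.strip().split()
        # Strip surrounding punctuation from the first word before comparing.
        first = words[0].strip(".,;:!?\"'").lower() if words else ""
        if first in AMBIGUOUS_OPENERS:
            flagged.append(heading)
    return flagged
```

A flagged heading is only a prompt for human review, since "This guide explains..." is perfectly clear while "This improves results" is not.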

Implementing Schema Markup for Entity Disambiguation

Implementing schema markup for entity disambiguation provides standardized, machine-readable context. Fact: The W3C recommends semantic HTML for clear entity relationships, which improves AI model interpretation. Recommendation: Deploy nested JSON-LD schema that explicitly links your organization to its products, founders, and core topics using the sameAs and knowsAbout properties.
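
To illustrate the nested markup described above, the sketch below builds an Organization JSON-LD document in Python. The properties used (`sameAs`, `knowsAbout`, `founder`, `makesOffer`, `itemOffered`) are standard Schema.org vocabulary, but the function name `build_organization_jsonld` and any values passed to it are hypothetical.

```python
import json


def build_organization_jsonld(name, url, same_as, knows_about, founder_name, product_name):
    """Return a JSON-LD string linking an organization to its profiles,
    topics, founder, and a product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs: URLs of authoritative profiles that identify the same entity.
        "sameAs": same_as,
        # knowsAbout: topics the organization has expertise in.
        "knowsAbout": knows_about,
        "founder": {"@type": "Person", "name": founder_name},
        "makesOffer": {
            "@type": "Offer",
            "itemOffered": {"@type": "Product", "name": product_name},
        },
    }
    return json.dumps(data, indent=2)
```

The returned string would typically be placed inside a `<script type="application/ld+json">` element in the page head, then checked with a structured data validator before deployment.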

Technical Setup for AI Crawlers

Technical setup for AI crawlers requires ensuring crawlability through standard robots.txt directives and optimized Core Web Vitals. Fact: A Salesforce report states AI-powered tools analyze datasets 10x larger than manual methods, meaning your site architecture must be highly efficient. Ensure your internal linking creates a logical semantic graph, grouping related topics tightly together so crawlers can establish topical authority without hitting crawl budget limits.
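
A quick way to confirm that robots.txt directives do what you intend is to test them against specific crawler names. The sketch below uses Python's standard-library `urllib.robotparser` to evaluate a robots.txt body that you supply as a string. The function name `crawler_access` is hypothetical, and the agent names you pass in should be whichever crawlers matter for your site.

```python
from urllib.robotparser import RobotFileParser


def crawler_access(robots_txt: str, url: str, agents):
    """Return {agent: allowed} for a URL under the given robots.txt rules."""
    parser = RobotFileParser()
    # parse() accepts the file content as an iterable of lines,
    # so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}
```

Note that this only reports what the directives say. Whether a given crawler honors them is up to that crawler, and the standard-library parser may differ in edge cases from the matching logic individual crawlers implement.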

Common Myths and Edge Cases in AI Search

The Misconception of Keyword Stuffing in LLMs

The misconception of keyword stuffing in LLMs stems from outdated practices that actively harm semantic grounding. Interpretation: We typically see legacy SEO practitioners attempting to force exact-match keywords into AI prompts or hidden text. This fails because modern LLMs use vector embeddings to understand context, not string matching. Over-optimization often triggers spam filters within the generative engine's safety layers.
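
The difference between embedding comparison and string matching can be shown with cosine similarity, the measure commonly used to compare embedding vectors. In the sketch below the three-dimensional vectors are hand-made toy values chosen purely for illustration. Real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy vectors (assumed, not produced by any model).
query = [0.9, 0.1, 0.0]       # stands in for a user question
paraphrase = [0.8, 0.2, 0.1]  # same meaning, different wording
unrelated = [0.0, 0.1, 0.9]   # different topic that repeats the keyword

closer_to_paraphrase = (
    cosine_similarity(query, paraphrase) > cosine_similarity(query, unrelated)
)
```

In this toy setup the paraphrase scores higher than the keyword-repeating but off-topic text, which is the behavior that makes repeating exact-match phrases ineffective.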

Addressing Hallucination Risks and Token Limits

Addressing hallucination risks and token limits requires providing concise, high-density factual statements. When content is verbose or contradictory, AI models may hallucinate or blend facts incorrectly. Recommendation: Use explicit claim typing in your content. Clearly separate facts from opinions so the LLM does not misinterpret subjective interpretation as objective data.

Navigating the llms.txt Debate

Navigating the llms.txt debate involves understanding that voluntary crawler directives currently lack universal adoption. Fact: Evidence is mixed on llms.txt efficacy, as a 2025 Perplexity report notes voluntary adoption varies by crawler. While an llms.txt file can point AI systems to a curated overview of your content, it is not an access-control mechanism, so do not rely on it as a strict security measure. Focus instead on providing high-value, public-facing content structured for citation.

Tool-Assisted Workflow for AI Visibility

Automating Performance Analysis and Audits

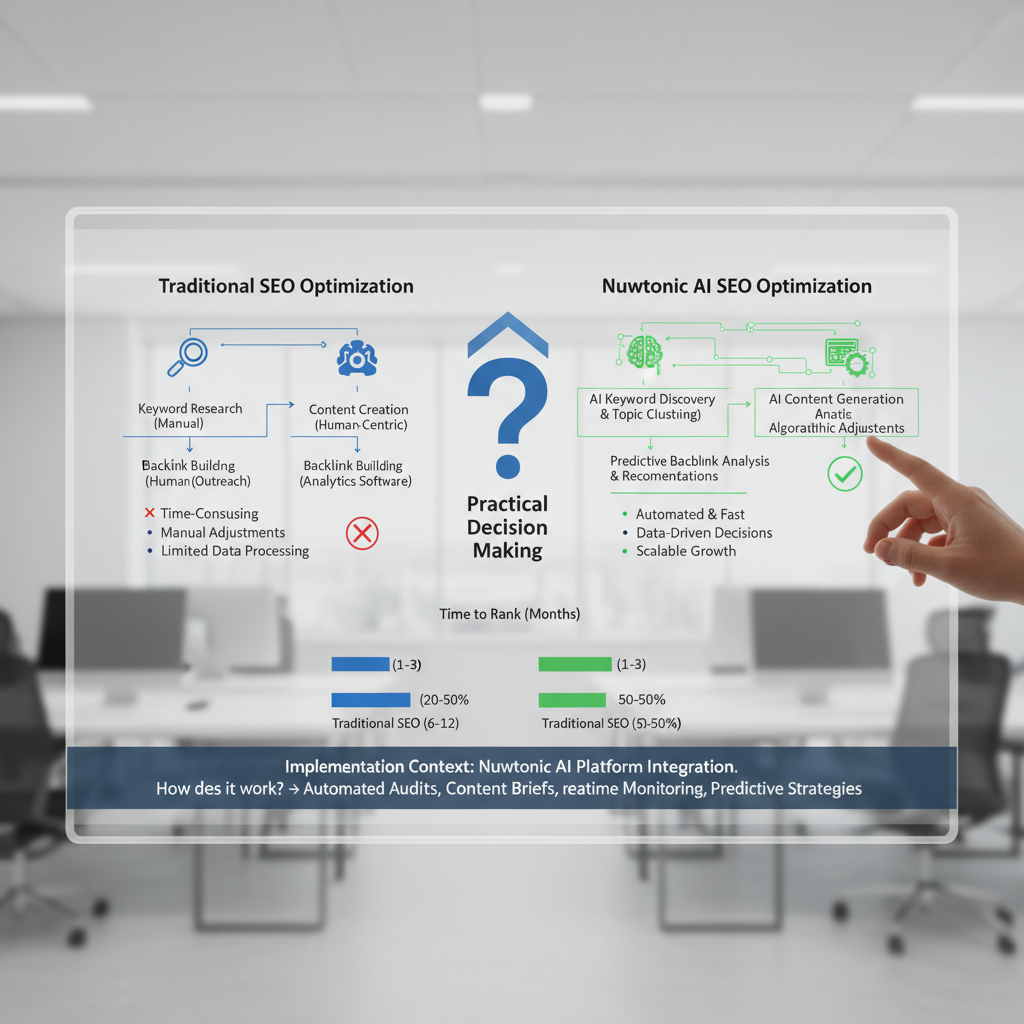

Automating performance analysis and audits bridges the gap between raw Google Search Console data and actionable AI optimization. Managing this manually across hundreds of URLs is practically impossible. By using Nuwtonic's AI SEO optimization tooling, you can ingest real-time query data and quickly detect visibility gaps. This allows you to map specific Google Search Console opportunities directly to your content strategy without relying on guesswork.

Executing Sitewide On-Page Fixes

Executing sitewide on-page fixes ensures technical foundations meet the strict requirements of generative engines. The Auto SEO & Performance Analysis module identifies underperforming keywords and generates prioritized optimization actions.

Key automated fixes include:

• Resolving structural inefficiencies in heading hierarchies.

• Injecting missing schema markup for critical entities.

• Repairing broken internal linking structures to improve crawlability.

• Updating metadata to align with AI extraction patterns.
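
As an illustration of what a heading-hierarchy check involves, the sketch below uses Python's standard-library `html.parser` to find places where a page jumps more than one heading level, such as an H1 followed directly by an H3. It is a generic example and does not represent how any particular tool implements its audit; the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is sufficient.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def find_skipped_levels(html: str):
    """Return (previous_level, current_level) pairs where a level was skipped."""
    collector = HeadingCollector()
    collector.feed(html)
    skips = []
    for prev, cur in zip(collector.levels, collector.levels[1:]):
        if cur > prev + 1:
            skips.append((prev, cur))
    return skips
```

Moving back up the hierarchy (for example from H3 to H2) is not flagged, since that is how a new section normally begins.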

Tracking Prompt-Level Visibility and Citations

Tracking prompt-level visibility and citations provides measurable ROI for Generative Engine Optimization efforts. Fact: A Beasley Direct integration demonstrated that blending SEO and AEO boosted AI visibility in Google AI Overviews by 30%. Using a dedicated AI Visibility & Citation Optimization system allows you to monitor prompt-level inclusions, benchmark competitors, and automatically apply fixes to improve your inclusion rates in synthetic responses.

Frequently Asked Questions About AI SEO

What exactly is AI SEO optimization?

AI SEO optimization is the practice of structuring website content and technical elements so that artificial intelligence models and generative search engines can easily extract, comprehend, and cite the information in their responses.

How does AEO differ from GEO?

Answer Engine Optimization (AEO) focuses on providing succinct answers for direct response platforms like voice search and AI Overviews, whereas Generative Engine Optimization (GEO) targets inclusion in comprehensive, synthesized summaries generated by tools like ChatGPT and Perplexity.

Can optimizing for AI harm traditional rankings?

Optimizing for AI systems generally improves traditional rankings because both rely on clear structure, high-quality factual data, and strong E-E-A-T signals. However, stripping away necessary user experience elements strictly for bot parsing can negatively impact human engagement metrics.

Which metrics track AI SEO success?

Success metrics for AI SEO include the frequency of brand citations in LLM outputs, inclusion rates in Google AI Overviews, referral traffic from AI platforms, and improvements in entity co-occurrence for target topics.

What is the impact of structured lists on LLM recall?

Structured lists increase Large Language Model recall rates significantly, with academic studies showing up to a 40% improvement in extractability compared to unstructured paragraph text, as lists provide clear, token-efficient boundaries for distinct facts.

As search continues to evolve toward generative synthesis, establishing topical authority requires a blend of rigorous technical structure and high-density factual content. By auditing your current entity relationships and leveraging automated optimization platforms, you can secure your brand's visibility in the next generation of search.