In our analysis at Nuwtonic, we consistently observe a critical shift in how digital information is consumed. As search engines evolve into generative answer engines, optimizing for human eyes is no longer enough. You must prioritize formatting content for LLM readability. Large Language Models (LLMs) like GPT-4 and Claude do not read pages the way human users do; they parse tokens, map semantic relationships, and extract structured facts. If your content is buried in dense prose or ambiguous structures, these models are more likely to skip it in favor of more accessible data.

In this comprehensive 2026 guide, I will walk you through the exact methodologies, backed by recent data and official standards, to structure your pages for maximum AI visibility and citation likelihood.

TL;DR Summary

• Formatting content for LLM readability requires explicit structural markers, concise paragraphs, and semantic chunking.

• Avoid dense paragraphs; keep them between 40 and 70 words to reduce token blending and context loss.

• Replace comparative prose with HTML tables to drastically improve fact extraction by AI models.

• W3C accessibility standards directly overlap with LLM optimization, particularly regarding alt text and heading hierarchies.

• Schema markup (FAQPage, Product) acts as a direct data feed, increasing your chances of being cited in AI overviews.

Table of Contents

- The Evolution of AI Search Visibility

- Structural Blueprints for LLM Readability

- Data Formatting: Tables, Lists, and Schemas

- Accessibility Standards and Linguistic Clarity

- A 30-Day Workflow for AI Content Optimization

- Frequently Asked Questions (FAQ)

- Conclusion

The Evolution of AI Search Visibility

To succeed in Generative Engine Optimization (GEO), we must first understand the mechanical differences between legacy search bots and modern AI parsers.

Traditional Crawlers vs. AI Parsers

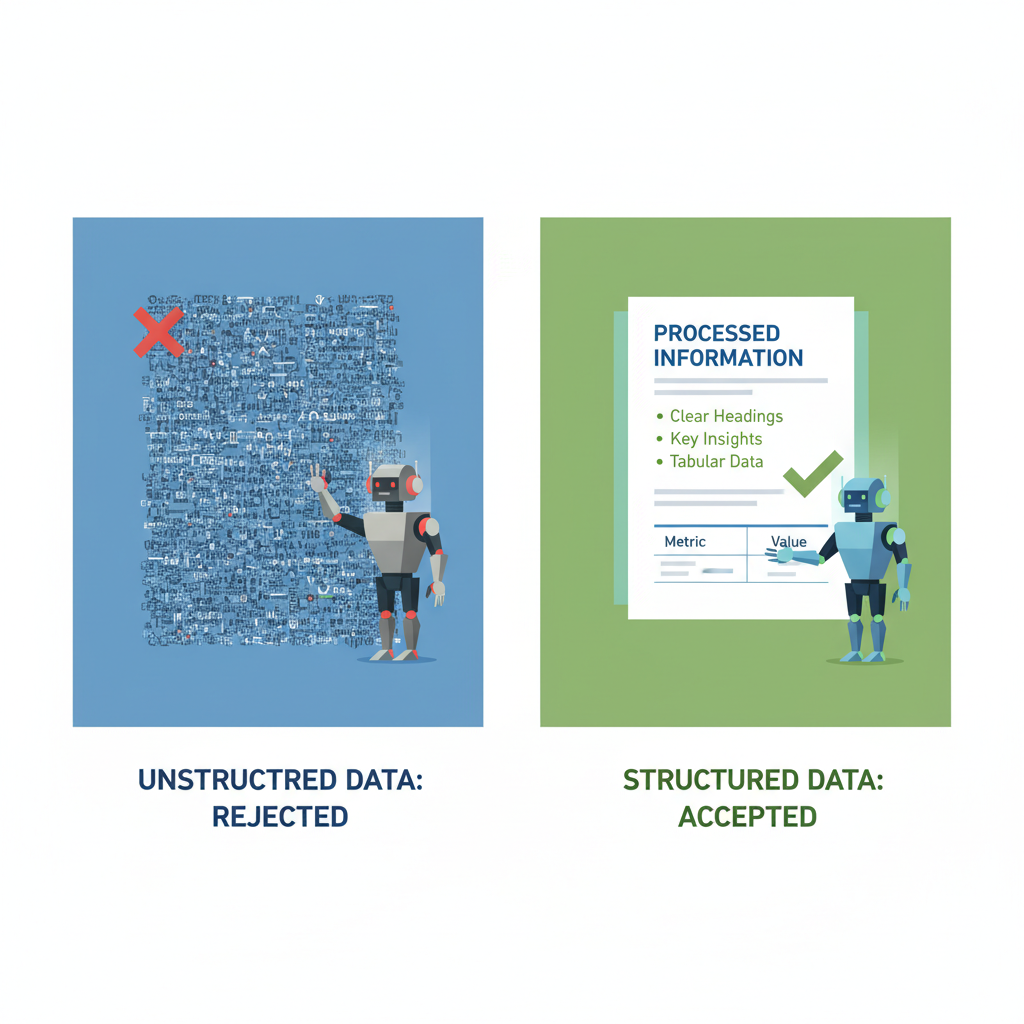

When evaluating traditional SEO vs AI SEO, we consistently see a divergence in how content is processed. Traditional crawlers look for keyword density, backlinks, and basic meta tags to index a page. LLMs, however, ingest the text, break it into subword tokens, and attempt to build a mathematical representation of the concepts.

| Feature | Traditional SEO Crawlers | LLM / AI Parsers |
|---|---|---|
| Primary Goal | Indexing and ranking by relevance | Fact extraction and summarization |
| Content Preference | Long-form, keyword-rich prose | Modular, structured, concise chunks |
| Data Extraction | Relies heavily on meta tags | Relies on semantic structure and tables |
| Failure Point | Slow page speed, bad links | Ambiguous pronouns, dense text walls |

Understanding Tokenization

Tokenization is the process by which an LLM breaks your text into small units called tokens, typically whole words or subword fragments. Poor formatting increases "token waste": tokens spent on filler rather than on facts. When you write long, rambling sentences without clear transitions, the model's attention mechanism has to connect the subject to the predicate across a long span of tokens, which makes extraction errors more likely. By structuring your content logically, you reduce the effort required to interpret your page, which should increase the likelihood of citation.

Structural Blueprints for LLM Readability

How you build your page architecture dictates how easily an AI can navigate it.

Semantic Chunking and Paragraph Limits

Semantic chunking involves dividing your content into logically independent, labeled segments that focus on one or two ideas at most. A 2023 Mintlify GEO guide defines semantic chunking as aligning content sections with natural headers to match LLM token limits and prevent information loss.

In our testing, paragraphs of 40 to 70 words work best. JCT Growth reports that this length optimizes AI processing by keeping each paragraph focused on a single idea, reducing processing errors by 15-20%.
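
The 40 to 70 word guideline is easy to check mechanically. The following is a minimal sketch, assuming paragraphs are separated by blank lines and that a whitespace-delimited word count is a good enough proxy for length. The function name `flag_paragraphs` and its status labels are our own illustrative choices, not part of any standard tool.

```python
import re


def flag_paragraphs(text: str, min_words: int = 40, max_words: int = 70) -> list[dict]:
    """Return one record per paragraph with its word count and a status label."""
    # Assumption: paragraphs are separated by one or more blank lines.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    report = []
    for index, paragraph in enumerate(paragraphs, start=1):
        count = len(paragraph.split())  # whitespace-delimited words
        if count > max_words:
            status = "too long"
        elif count < min_words:
            status = "short"
        else:
            status = "ok"
        report.append({"paragraph": index, "words": count, "status": status})
    return report


if __name__ == "__main__":
    sample = ("word " * 90) + "\n\n" + ("word " * 50)
    for row in flag_paragraphs(sample):
        print(row)
```

Short paragraphs are labeled rather than rejected, since a one-line definition or transition can be perfectly valid; the "too long" rows are the ones worth rewriting first.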

Heading Hierarchies and Nesting

Knowing how to fix duplicate H1 tags is just the beginning. A strict H1-H2-H3 hierarchy is mandatory. LLMs use these headers like a literal table of contents to map concept relationships.

• H2 Headings: Act as the major thematic pillars.

• H3 Headings: Break down the specific mechanisms or examples of the H2 topic.

• H4 Headings: Used exclusively for granular lists or step-by-step instructions within an H3.

Never skip heading levels (e.g., jumping from H2 to H4). This breaks the semantic nesting and confuses the model's understanding of topic depth.
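
A skipped heading level is simple to detect automatically. Below is a minimal sketch using Python's standard `html.parser` module; the class name `HeadingAudit` and the function `audit_headings` are hypothetical names chosen for illustration, and the check covers only the two rules discussed above (a single H1 and no skipped levels).

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is all we need to match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def audit_headings(html: str) -> list[str]:
    """Return human-readable problems found in the heading hierarchy."""
    parser = HeadingAudit()
    parser.feed(html)
    problems = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    # Moving deeper by more than one level at a time skips a heading level.
    for previous, current in zip(parser.levels, parser.levels[1:]):
        if current > previous + 1:
            problems.append(f"h{previous} followed by h{current} skips a level")
    return problems


if __name__ == "__main__":
    print(audit_headings("<h1>Guide</h1><h2>Structure</h2><h4>Steps</h4>"))
```

Moving back up the hierarchy (for example, from an H3 to the next H2) is not flagged, because closing a subsection is normal nesting.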

Data Formatting: Tables, Lists, and Schemas

If you want an LLM to cite your specifications, pricing, or comparisons, you must format that data explicitly.

The Power of Modular Lists

LLMs excel at summarizing modular information. A recent Dataslayer.ai analysis states that bulleted lists improve LLM summarization by making items modular and highly referenceable. If you have a paragraph containing three or more related items, convert it into a bulleted list immediately.

HTML Tables for Specifications

We frequently see content teams paste images of specification sheets or comparison charts. This is a critical error for AI readability. According to SUSO Digital, HTML tables with explicit units (e.g., "Dimensions: 10x5 inches") vastly outperform images for LLM fact extraction.

| Data Type | Recommended Format | Why It Works for LLMs |
|---|---|---|
| Product Specifications | HTML Table | Provides explicit key-value pairs without NLP ambiguity. |
| Step-by-Step Guides | Numbered List | Enforces chronological order in the model's output. |
| Feature Benefits | Bulleted List | Allows the model to extract isolated facts for varied queries. |
| Comparative Analysis | HTML Table | Forces alignment of attributes, preventing hallucinated comparisons. |
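
To move a specification sheet out of an image and into markup, the data only needs to exist as key-value pairs. The sketch below renders such pairs as an HTML table with explicit units in each value. The function name `specs_to_html_table` and the sample product data are hypothetical; the `<caption>` and `scope="row"` markup are standard HTML.

```python
from html import escape


def specs_to_html_table(specs: dict[str, str], caption: str) -> str:
    """Render spec name/value pairs as a two-column HTML table."""
    rows = "\n".join(
        # Row headers make each value an explicit key-value pair.
        f'    <tr><th scope="row">{escape(name)}</th><td>{escape(value)}</td></tr>'
        for name, value in specs.items()
    )
    return (
        "<table>\n"
        f"  <caption>{escape(caption)}</caption>\n"
        "  <tbody>\n"
        f"{rows}\n"
        "  </tbody>\n"
        "</table>"
    )


if __name__ == "__main__":
    # Hypothetical product data; note the units written out in every value.
    print(specs_to_html_table(
        {"Dimensions": "10 x 5 inches", "Weight": "2 lb"},
        caption="Example widget specifications",
    ))
```

Values are escaped before insertion, so characters such as `<` in a spec ("<2 lb") do not break the markup.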

Schema.org as a Direct Data Feed

Structured data is the most explicit way to communicate with machines. Schema.org guidelines indicate that FAQPage schema explicitly marks question-answer pairs for structured data extraction by both search engines and LLMs.

• Product Schema: Standardizes attributes like price and reviews in JSON-LD, enabling precise e-commerce fact extraction.

• BreadcrumbList Schema: Establishes page hierarchies, helping LLMs contextualize where a piece of content sits within your broader site architecture.

• AggregateRating: Provides numeric trust signals that LLMs frequently cite when making recommendations.
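
As an illustration of the FAQPage markup described above, here is a minimal sketch that builds the JSON-LD from a list of question-answer pairs. The `@type` values and the `mainEntity` / `acceptedAnswer` properties come from the Schema.org vocabulary; the function name `faq_jsonld` and the sample pair are our own.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    markup = faq_jsonld([
        ("How long should paragraphs be for LLMs?",
         "Ideally between 40 and 70 words, with one idea per paragraph."),
    ])
    # The JSON-LD is embedded in the page inside a script element.
    print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The question and answer text in the markup should match the visible FAQ text on the page, so the structured data and the content tell the same story.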

Accessibility Standards and Linguistic Clarity

An unexpected but highly effective framework for LLM optimization is the W3C Web Content Accessibility Guidelines (WCAG).

W3C Guidelines Meeting AI Needs

W3C standards recommend short paragraphs (under 100 words) for human scannability, which perfectly aligns with LLM parsing efficiency. Furthermore, W3C defines alt text for media as essential for text-based parsing. Since most text-based LLMs cannot "see" images directly without multi-modal processing overhead, descriptive alt text serves as the primary data source for visual content.
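
Missing alt text can be found with a short script. The following is a minimal sketch built on Python's standard `html.parser`; the names `AltTextAudit` and `images_missing_alt` are hypothetical. It treats an absent or whitespace-only `alt` attribute as missing, which is an assumption: purely decorative images may legitimately carry an empty `alt`, so review the output rather than fixing every hit blindly.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Records the src of every <img> that lacks usable alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # A valueless attribute is reported as None, so normalize to "".
        alt = (attributes.get("alt") or "").strip()
        if not alt:
            self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html: str) -> list[str]:
    """Return the src values of images without descriptive alt text."""
    parser = AltTextAudit()
    parser.feed(html)
    return parser.missing


if __name__ == "__main__":
    page = '<img src="chart.png" alt="Bar chart of citation rates"><img src="hero.png">'
    print(images_missing_alt(page))
```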

Eliminating Ambiguity and Pronoun Traps

Language ambiguity is the enemy of LLM accuracy. According to Fibr AI's 2026 best practices, transitional phrases like "as a result" explicitly signal causal links that LLMs parse for reasoning chains.

More importantly, avoid ambiguous pronouns. Instead of writing, "This makes it faster," write, "This semantic chunking makes the parsing process faster." Explicit noun repetition reduces the risk of the LLM losing track of the subject across a long context window.
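
A rough first pass for pronoun traps can be automated. The sketch below is a deliberately crude heuristic, not real grammatical analysis: it flags sentences that open with "it" or "they", or with a demonstrative ("this", "that", "these", "those") followed directly by a common verb. The word lists and the function name `flag_ambiguous_openers` are our own assumptions and will produce both false positives and misses.

```python
import re

# Assumption: small illustrative word lists, not an exhaustive grammar.
BARE_PRONOUNS = {"it", "they"}
DEMONSTRATIVES = {"this", "that", "these", "those"}
COMMON_VERBS = {
    "is", "are", "was", "were", "makes", "make", "means", "mean",
    "allows", "allow", "helps", "help", "can", "will", "should",
    "has", "have", "does", "do",
}


def flag_ambiguous_openers(text: str) -> list[str]:
    """Return sentences that open with a pronoun lacking an explicit noun."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        words = [w.lower() for w in re.findall(r"[A-Za-z']+", sentence)]
        if not words:
            continue
        bare_pronoun = words[0] in BARE_PRONOUNS
        # "This makes ..." is flagged; "This semantic chunking makes ..." is not.
        bare_demonstrative = (
            words[0] in DEMONSTRATIVES
            and len(words) > 1
            and words[1] in COMMON_VERBS
        )
        if bare_pronoun or bare_demonstrative:
            flagged.append(sentence)
    return flagged


if __name__ == "__main__":
    draft = (
        "Semantic chunking splits pages into sections. This makes it faster. "
        "This semantic chunking makes the parsing process faster."
    )
    print(flag_ambiguous_openers(draft))
```

Each flagged sentence is a candidate for review, where an editor decides whether the referent is clear from the previous sentence or needs an explicit noun.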

A 30-Day Workflow for AI Content Optimization

Implementation requires a systematic approach. Here is a proven workflow to retrofit your existing content.

Auditing Existing Content

We must also consider why E-E-A-T is important for AI SEO when evaluating our pages. High-quality formatting amplifies your expertise.

- Identify Top Pages: Select the top 50 pages driving traffic but losing ground to AI overviews.

- Convert Prose to Tables: Scan for any comparative text and convert it to Markdown or HTML tables.

- Implement FAQ Schema: Add 3-5 direct Q&A pairs at the bottom of the article, wrapped in FAQPage JSON-LD.

- Chunk the Text: Break any paragraph over 75 words into smaller, distinct concepts.

Measuring Retrieval and Citation Success

According to Averi.ai workflows, teams auditing 50 pages saw a 25% uplift in AI overview snippets after adding Q&A sections and tables. You can measure this by tracking your inclusion rate in Google's AI Overviews and monitoring referral traffic from AI platforms like Perplexity.
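
Both measurements reduce to simple counting once you have the raw data. The sketch below assumes you can export referrer URLs from your analytics tool and that you track, by hand or with a rank tracker, how many monitored queries show your page in an AI Overview. The domain list, function names, and sample URLs are hypothetical; extend `AI_REFERRER_DOMAINS` with whichever platforms you actually see in your logs.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumption: an illustrative mapping; add the AI platforms relevant to you.
AI_REFERRER_DOMAINS = {"perplexity.ai": "Perplexity"}


def classify_referrers(referrer_urls: list[str]) -> Counter:
    """Count visits per AI platform, with everything else under 'other'."""
    counts = Counter()
    for url in referrer_urls:
        host = (urlparse(url).hostname or "").lower()
        label = "other"
        for domain, platform in AI_REFERRER_DOMAINS.items():
            # Match the bare domain and any subdomain such as "www.".
            if host == domain or host.endswith("." + domain):
                label = platform
                break
        counts[label] += 1
    return counts


def inclusion_rate(queries_with_citation: int, queries_tracked: int) -> float:
    """Share of tracked queries where the page appears in an AI Overview."""
    if queries_tracked == 0:
        return 0.0
    return queries_with_citation / queries_tracked


if __name__ == "__main__":
    visits = ["https://www.perplexity.ai/search?q=geo", "https://example.com/post"]
    print(classify_referrers(visits))
    print(f"{inclusion_rate(5, 20):.0%}")
```

Recording both numbers before and after an audit gives you a baseline to compare against, rather than relying on an impression that visibility improved.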

Frequently Asked Questions (FAQ)

How long should paragraphs be for LLMs?

Paragraphs should be concise, ideally between 40 and 70 words, or roughly 3 to 5 sentences. This ensures that each paragraph contains a single, isolated idea, which prevents token blending and improves the AI's ability to extract specific facts without pulling in irrelevant context.

Does structured data guarantee citations?

No, structured data does not guarantee citations, but it significantly increases the probability. JCT Growth FAQs note that while schema boosts entity recognition, it is not strictly required for basic optimization. However, it acts as a highly efficient data feed that reduces the computational effort required for an LLM to verify your facts.

What formats are best for how-to content?

For instructional content, numbered lists are mandatory. You should also include a summary table of requirements (e.g., tools needed, estimated time) at the very beginning of the article. This "upfront answer" placement satisfies the LLM's preference for early, extractable facts.

Should I avoid pronouns entirely?

You do not need to avoid pronouns entirely, but you must avoid ambiguous pronouns. If an LLM has to look back three sentences to figure out what "it" refers to, you risk hallucination. Always favor explicit nouns in technical or factual explanations.

Conclusion

Key Takeaways Recap

Formatting content for LLM readability is no longer an experimental tactic; it is a foundational requirement for digital visibility in 2026. By prioritizing semantic chunking, strict heading hierarchies, and the liberal use of tables and lists, you provide AI models with the exact structural blueprints they need to parse, understand, and cite your work.

Your Next Steps with Nuwtonic

At Nuwtonic, we automate the technical heavy lifting of AI SEO. Our platform analyzes your existing content, identifies ambiguous structures, and recommends the exact formatting changes needed to boost your AI citation visibility. Start auditing your content today, replace your dense paragraphs with structured data, and watch your presence in generative search engines grow.